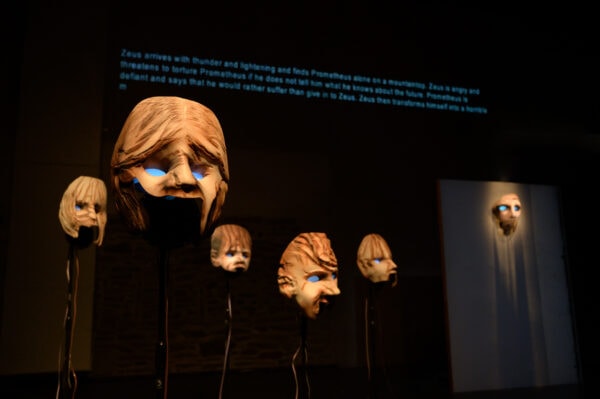

Annie Dorsen’s Prometheus Firebringer (Photo: Johanna Austin)

“I chose these words carefully.” That’s what Annie Dorsen says in her new piece of algorithmic theater, Prometheus Firebringer. But they are not her words exactly.

She performs an essay cobbled together from the quotes of others including Roland Barthes, The Twilight Zone, Susan Sontag, and government hearings. She addresses AI, tragedy, memory, experience, language, choice and knowledge. The citation for each quote is projected behind her as she speaks it.

One sentence and citation, “Contrary to popular decree, there is such a thing as a ‘bad choice,’” from The Teen Acting Ensemble: A Practical Guide to Doing Theater with Teenagers, got a big laugh from the audience.

Between segments of her reading of this essay, she hands the reins over to AI. She uses GPT-3, a precursor to ChatGPT, to generate the missing chapters of the Promethean trilogy.

The character of Prometheus and a chorus of orphan children are on stage as masks with eyes that light up. Voice prints from actors are used to recite the text that the algorithm changes with each performance.

We first see Prometheus strapped to a rock (well, he’s a mask on a wall) living out his millennia-long punishment for stealing fire from the gods and giving it to the human race. He is confronted by the group of orphan children who ask “What will become of us?” Sometimes in rhymes, these characters “speak” to each other.

The children are adrift and say there is “no one to help us dry our tears.” Though the computer rendering of “tears” gets pronounced “tares.”

I’m reminded of the song by Korean pop group BTS, “Tear”, where the artists intentionally play with the linguistic nuances between crying and being torn apart when the group was close to breaking up.

“You’re my tear [teer]” and “You’re my tear [tare]” make for slightly different emotional accusations with just a small adjustment of pronunciation and tone.

But here it makes me think that the AI doesn’t have the capacity to be this intentionally inventive with language or understand the difference. Predictive text is not always right. And why exactly are we trusting machines so much these days? Dorsen is asking us to think about that as well.

Dorsen’s evolving creative use of algorithmic theater has been a subject of fascination for me. In Yesterday/Tomorrow, she had a computer, note by note, find its way from the Beatles’ Yesterday to Tomorrow from Annie and then had human singers perform what the computer wrought. In The Great Outdoors, she took anonymous chatter from the internet and turned it into a galaxy. She has also remixed Hamlet in A Piece of Work. Her creative applications of algorithms have been really exciting and inventive.

But now that I have encountered ChatGPT in my own life, the linguistic stultification, at times, is so apparent in this tool. The text generated can fall into a strange 4th grade book report style of speech with looping empty self-justification. Very much “I’ve made the point because I’ve made the point. And here’s the point which I have made.”

I had to edit some text recently written by ChatGPT and it felt like I was drowning in English-language quicksand and I was struggling to find a way back to inject human expression into the words again.

Similarly, in Prometheus Firebringer, the overwhelming feeling from the interaction between Prometheus and the children is a chasmic lack of empathy.

Prometheus is casually predicting a destructive future, the end of the world, and yet telling the kids to not worry. “Future of despair and misery. Do not be afraid,” he says. But the kids ask “What will become of us when [the end of the world] is here?” He cannot assuage their fears.

Though I was briefly charmed by something approaching accidental creativity from the AI. The children say they are “flies in a bottle” and it was the first moment a vivid image was painted by these voices. Though it was undercut slightly by the AI yanking in some “dust unto dust” from the Bible in the same segment.

But this is Dorsen’s point. We need to look at this use of language. By placing her use of selective text against the machine’s act of predictive text, we see how different the process of sourcing is.

She is creatively sculpting an argument using other people’s words and the placement of them next to each other serves her ultimate point. That point comes from her mind even if it is being expressed with someone else’s words. Her selection reflects her judgment, opinions, creativity, and ideas. And she is wry, funny, and probing in this essay.

The AI is making predictions based on Aeschylean inputs but it cannot match the consistent depth of thought or creativity of Dorsen. There are just constant mismatches in tone and emotion with AI. If it’s funny, it’s not on purpose.

Whatever the AI is sculpting ends up a bit like my middle school ceramics attempts. Not wholly bad, generally resembling the intended object (it’s a bowl I think) but often lopsided. And those bowls were neither utilitarian nor art.

There is also the overall question about what this new technology means for all of us. Dorsen’s essay talks about a man using AI to colorize photos of the victims of the Khmer Rouge AND THEN GIVING THEM SMILES. What the absolute fuck. And if AI learns from these inputs then history gets re-written. So it is in our hands where we go from here. Our bad choices will have future consequences.

Prometheus ponders “Will tech enslave us. Will tech save us.” How we choose to use these tools or rely on them will determine the outcome. Prometheus saw a dark future. Dorsen does not necessarily disagree but she ends her essay with quotes of hope (and some playfulness with the way in which we repeatedly express ourselves using the same exact language).

“He who saves the life of one human, saves all of humanity. Thus it is written in both the Talmud and the Quran. As long as there is time, there is time for care. God willing: ‘Inshallah.’”

But now is the moment to decide how we act.